UCT

UCT (Upper Confidence bounds applied to Trees),

a popular algorithm that addresses a flaw of plain Monte-Carlo Tree Search: a program may favor a losing move that has only one or a few forced refutations, because the vast majority of the other replies give it a better random playout score than other, better moves. UCT was introduced by Levente Kocsis and Csaba Szepesvári in 2006 [1], and accelerated the Monte-Carlo revolution in computer Go [2] and in other games that are difficult to evaluate statically. Given infinite time and memory, UCT theoretically converges to Minimax.

Selection

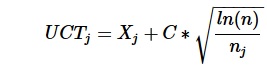

In UCT, upper confidence bounds (UCB1) guide the selection of a node [3]. Selection is treated as a multi-armed bandit problem, in which the crucial tradeoff the gambler faces at each trial is between exploitation of the slot machine that has the highest expected payoff and exploration to get more information about the expected payoffs of the other machines. The child node j that maximizes the UCT evaluation is selected:

$$UCT_j = X_j + C \sqrt{\frac{\ln n}{n_j}}$$

where:

- $X_j$ is the win ratio of the child

- $n$ is the number of times the parent has been visited

- $n_j$ is the number of times the child has been visited

- $C$ is a constant that adjusts the amount of exploration and incorporates the $\sqrt{2}$ from the UCB1 formula

The first component of the formula above corresponds to exploitation, as it is high for moves with a high average win ratio. The second component corresponds to exploration, since it is high for moves with few simulations.
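The selection rule can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the `Node` class, its field names, the default value of `c`, and the choice to treat unvisited children as infinitely attractive are all assumptions made for the example.

```python
import math
from dataclasses import dataclass, field


@dataclass
class Node:
    """Hypothetical tree node holding only the statistics UCT needs."""
    wins: float = 0.0    # sum of playout results, seen from the parent's side
    visits: int = 0      # n_j for a child, n for the parent
    children: list = field(default_factory=list)


def uct_value(child: Node, parent_visits: int, c: float = 1.4) -> float:
    """X_j + C * sqrt(ln(n) / n_j) for one child."""
    if child.visits == 0:
        # Assumption: an unvisited child is always tried before revisiting others.
        return math.inf
    win_ratio = child.wins / child.visits                            # exploitation
    bonus = c * math.sqrt(math.log(parent_visits) / child.visits)    # exploration
    return win_ratio + bonus


def select_child(parent: Node, c: float = 1.4) -> Node:
    """Return the child that maximizes the UCT evaluation."""
    return max(parent.children, key=lambda ch: uct_value(ch, parent.visits, c))


# Usage: a well-explored strong move versus a rarely visited weaker one.
strong = Node(wins=60, visits=80)   # win ratio 0.75
rare = Node(wins=9, visits=20)      # win ratio 0.45
root = Node(visits=100, children=[strong, rare])

# With c = 1.4 the exploration bonus outweighs the win ratio, so `rare` is picked;
# with c = 0.5 the search exploits and picks `strong`.
picked_exploring = select_child(root, c=1.4)
picked_exploiting = select_child(root, c=0.5)
```

A larger `c` spends more simulations on rarely visited moves, while a smaller `c` concentrates on the moves that currently look best.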

RAVE

Most contemporary implementations of MCTS are based on some variant of UCT [4]. Modifications have been proposed with the aim of shortening the time needed to find good moves. They can be divided into improvements based on expert knowledge and domain-independent improvements, applied either in the playouts or in building the tree. An example of the latter is Rapid Action Value Estimation (RAVE) [5], which modifies the exploitation part of the UCB1 formula and considers transpositions.
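As a rough illustration of how the exploitation term can be modified, the sketch below blends the ordinary Monte-Carlo win ratio of a move with an all-moves-as-first (AMAF) estimate, which is the idea behind RAVE. The function names, the equivalence parameter `k`, and the particular weighting schedule `sqrt(k / (3n + k))` are assumptions chosen for the example; real programs tune or derive the schedule differently.

```python
import math


def rave_beta(visits: int, k: float = 1000.0) -> float:
    """Weight of the AMAF estimate: 1 with no visits, decaying toward 0.

    Assumption: a hand-tuned schedule of the form sqrt(k / (3n + k)).
    """
    return math.sqrt(k / (3.0 * visits + k))


def rave_value(q_mc: float, q_amaf: float, visits: int, k: float = 1000.0) -> float:
    """Blend the Monte-Carlo win ratio with the AMAF win ratio.

    The result replaces the plain win ratio X_j in the selection formula.
    """
    beta = rave_beta(visits, k)
    return (1.0 - beta) * q_mc + beta * q_amaf


# Usage: with no visits the AMAF estimate is used alone; after k visits the
# two estimates are weighted equally under this schedule.
fresh = rave_value(q_mc=0.2, q_amaf=0.8, visits=0)
balanced = rave_value(q_mc=0.2, q_amaf=0.8, visits=1000)
```

The blend lets a node with few simulations borrow statistics from playouts in which the same move was played later, then shifts toward its own win ratio as simulations accumulate.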

PUCT

Chris Rosin's PUCT modifies the original UCB1 multi-armed bandit policy by approximately predicting good arms at the start of a sequence of multi-armed bandit trials ('Predictor' + UCB = PUCB) [6]. A variation of PUCT was used in the AlphaGo and AlphaZero projects [7], and subsequently also in Leela Zero and Leela Chess Zero [8].
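The following sketch shows a predictor-weighted selection rule in the form commonly described for AlphaGo Zero and AlphaZero, where each child's exploration bonus is scaled by a prior probability. It is not Rosin's original PUCB formula, and it is not claimed to match the exact code of any of the programs named above; the dictionary layout, the function names, and the default `c_puct` are assumptions for illustration.

```python
import math


def puct_score(q: float, prior: float, parent_visits: int, child_visits: int,
               c_puct: float = 1.5) -> float:
    """Q + c_puct * P * sqrt(N_parent) / (1 + N_child)."""
    exploration = c_puct * prior * math.sqrt(parent_visits) / (1 + child_visits)
    return q + exploration


def select_puct(children: list, c_puct: float = 1.5) -> int:
    """Return the index of the child with the highest PUCT score.

    Each child is a dict with hypothetical keys "q" (mean value),
    "prior" (predictor probability) and "visits" (visit count).
    """
    parent_visits = sum(ch["visits"] for ch in children)
    scores = [
        puct_score(ch["q"], ch["prior"], parent_visits, ch["visits"], c_puct)
        for ch in children
    ]
    return max(range(len(children)), key=scores.__getitem__)


# Usage: an unvisited move with a high prior is preferred over a visited move
# with a decent value; with c_puct = 0 only the value matters.
moves = [
    {"q": 0.5, "prior": 0.1, "visits": 10},
    {"q": 0.0, "prior": 0.9, "visits": 0},
]
with_prior = select_puct(moves, c_puct=1.5)
value_only = select_puct(moves, c_puct=0.0)
```

Compared with the UCB1-based rule, the prior steers early simulations toward moves the predictor considers promising instead of visiting every child once.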

Quotes

Gian-Carlo Pascutto

Quote by Gian-Carlo Pascutto in 2010 [9]:

There is no significant difference between an alpha-beta search with heavy LMR and a static evaluator (current state of the art in chess) and an UCT searcher with a small exploration constant that does playouts (state of the art in go).

The shape of the tree they search is very similar. The main breakthrough in Go the last few years was how to backup an uncertain Monte Carlo score. This was solved. For chess this same problem was solved around the time quiescent search was developed.

Both are producing strong programs and we've proven for both the methods that they scale in strength as hardware speed goes up.

So I would say that we've successfully adopted the simple, brute force methods for chess to Go and they already work without increases in computer speed. The increases will make them progressively stronger though, and with further software tweaks they will eventually surpass humans.

Raghuram Ramanujan et al.

Quote by Raghuram Ramanujan, Ashish Sabharwal, and Bart Selman from the abstract of On Adversarial Search Spaces and Sampling-Based Planning [10]:

UCT has been shown to outperform traditional minimax based approaches in several challenging domains such as Go and KriegSpiel, although minimax search still prevails in other domains such as Chess. This work provides insights into the properties of adversarial search spaces that play a key role in the success or failure of UCT and similar sampling-based approaches. We show that certain "early loss" or "shallow trap" configurations, while unlikely in Go, occur surprisingly often in games like Chess (even in grandmaster games). We provide evidence that UCT, unlike minimax search, is unable to identify such traps in Chess and spends a great deal of time exploring much deeper game play than needed.

Publications

2000 ...

- Peter Auer, Nicolò Cesa-Bianchi, Paul Fischer (2002). Finite-time Analysis of the Multiarmed Bandit Problem. Machine Learning, Vol. 47, No. 2, pdf

2005 ...

2006

- Levente Kocsis, Csaba Szepesvári (2006). Bandit based Monte-Carlo Planning. ECML-06, LNCS/LNAI 4212, pdf

- Levente Kocsis, Csaba Szepesvári, Jan Willemson (2006). Improved Monte-Carlo Search. pdf

- Sylvain Gelly, Yizao Wang (2006). Exploration exploitation in Go: UCT for Monte-Carlo Go. pdf

- Sylvain Gelly, Yizao Wang, Rémi Munos, Olivier Teytaud (2006). Modification of UCT with Patterns in Monte-Carlo Go. INRIA

2007

- Yizao Wang, Sylvain Gelly (2007). Modifications of UCT and Sequence-Like Simulations for Monte-Carlo Go. IEEE Symposium on Computational Intelligence and Games, Honolulu, USA, 2007, pdf

- Shugo Nakamura, Makoto Miwa, Takashi Chikayama (2007). Improvement of UCT using evaluation function. 12th Game Programming Workshop 2007

- Tristan Cazenave, Nicolas Jouandeau (2007). On the Parallelization of UCT. CGW 2007, pdf » Parallel Search

- Jean-Yves Audibert, Rémi Munos, Csaba Szepesvári (2007). Tuning Bandit Algorithms in Stochastic Environments. pdf

2008

- Nathan Sturtevant (2008). An Analysis of UCT in Multi-player Games. CG 2008

- Guillaume Chaslot, Mark Winands, Jaap van den Herik (2008). Parallel Monte-Carlo Tree Search. CG 2008, pdf

2009

- Rémi Coulom (2009). The Monte-Carlo Revolution in Go. JFFoS'2008: Japanese-French Frontiers of Science Symposium, slides as pdf

- Fabien Teytaud, Olivier Teytaud (2009). Creating an Upper-Confidence-Tree program for Havannah. Advances in Computer Games 12, inria-00380539 as pdf » Havannah

2010 ...

- Jean Méhat, Tristan Cazenave (2010). Combining UCT and Nested Monte-Carlo Search for Single-Player General Game Playing. IEEE Transactions on Computational Intelligence and AI in Games, Vol. 2, No. 4, pdf 2009 » General Game Playing

- Raghuram Ramanujan, Ashish Sabharwal, Bart Selman (2010). On Adversarial Search Spaces and Sampling-Based Planning. ICAPS 2010 [11]

- Damien Pellier, Bruno Bouzy, Marc Métivier (2010). An UCT Approach for Anytime Agent-based Planning. PAAMS'10, pdf

- Thomas J. Walsh, Sergiu Goschin, Michael L. Littman (2010). Integrating sample-based planning and model-based reinforcement learning. AAAI, pdf » Reinforcement Learning

- Takayuki Yajima, Tsuyoshi Hashimoto, Toshiki Matsui, Junichi Hashimoto, Kristian Spoerer (2010). Node-Expansion Operators for the UCT Algorithm. CG 2010

2011

- Junichi Hashimoto, Akihiro Kishimoto, Kazuki Yoshizoe, Kokolo Ikeda (2011). Accelerated UCT and Its Application to Two-Player Games. Advances in Computer Games 13

- Jeff Rollason (2011). Mixing MCTS with Conventional Static Evaluation: Experiments and shortcuts en-route to full UCT. AI Factory, Winter 2011 » Evaluation

- Sylvain Gelly, David Silver (2011). Monte-Carlo tree search and rapid action value estimation in computer Go. Artificial Intelligence, Vol. 175, No. 11, preprint as pdf

- Lars Schaefers, Marco Platzner, Ulf Lorenz (2011). UCT-Treesplit - Parallel MCTS on Distributed Memory. ICAPS 2011, pdf » Parallel Search

- Raghuram Ramanujan, Bart Selman (2011). Trade-Offs in Sampling-Based Adversarial Planning. ICAPS 2011

- Christopher D. Rosin (2011). Multi-armed bandits with episode context. Annals of Mathematics and Artificial Intelligence, Vol. 61, No. 3, ISAIM 2010 pdf » PUCT

- Adrien Couëtoux, Jean-Baptiste Hoock, Nataliya Sokolovska, Olivier Teytaud, Nicolas Bonnard (2011). Continuous Upper Confidence Trees. LION 2011, pdf

- David Tolpin, Solomon Eyal Shimony (2011). Doing Better Than UCT: Rational Monte Carlo Sampling in Trees. arXiv:1108.3711

2012

- Oleg Arenz (2012). Monte Carlo Chess. B.Sc. thesis, Darmstadt University of Technology, advisor Johannes Fürnkranz, pdf » Stockfish

- Cameron Browne, Simon Lucas, et al. (2012). A Survey of Monte Carlo Tree Search Methods. IEEE Transactions on Computational Intelligence and AI in Games, Vol. 4, pdf

- Sylvain Gelly, Marc Schoenauer, Michèle Sebag, Olivier Teytaud, Levente Kocsis, David Silver, Csaba Szepesvári (2012). The Grand Challenge of Computer Go: Monte Carlo Tree Search and Extensions. Communications of the ACM, Vol. 55, No. 3, pdf preprint

- Adrien Couetoux, Olivier Teytaud, Hassen Doghmen (2012). Learning a Move-Generator for Upper Confidence Trees. ICS 2012, Hualien, Taiwan, December 2012 » Learning, Move Generation

- Ashish Sabharwal, Horst Samulowitz, Chandra Reddy (2012). Guiding Combinatorial Optimization with UCT. CPAIOR 2012, abstract as pdf, draft as pdf

- Raghuram Ramanujan, Ashish Sabharwal, Bart Selman (2012). Understanding Sampling Style Adversarial Search Methods. arXiv:1203.4011

- Cheng-Wei Chou, Ping-Chiang Chou, Chang-Shing Lee, David L. Saint-Pierre, Olivier Teytaud, Mei-Hui Wang, Li-Wen Wu, Shi-Jim Yen (2012). Strategic Choices: Small Budgets and Simple Regret. TAAI 2012, Excellent Paper Award, pdf

- Arthur Guez, David Silver, Peter Dayan (2012). Efficient Bayes-Adaptive Reinforcement Learning using Sample-Based Search. arXiv:1205.3109

- Truong-Huy Dinh Nguyen, Wee Sun Lee, Tze-Yun Leong (2012). Bootstrapping Monte Carlo Tree Search with an Imperfect Heuristic. arXiv:1206.5940

- David Tolpin, Solomon Eyal Shimony (2012). MCTS Based on Simple Regret. AAAI-2012, arXiv:1207.5536

2013

- Simon Viennot, Kokolo Ikeda (2013). Efficiency of Static Knowledge Bias in Monte-Carlo Tree Search. CG 2013

- Rui Li, Yueqiu Wu, Andi Zhang, Chen Ma, Bo Chen, Shuliang Wang (2013). Technique Analysis and Designing of Program with UCT Algorithm for NoGo. CCDC2013

- Ari Weinstein, Michael L. Littman, Sergiu Goschin (2013). Rollout-based Game-tree Search Outprunes Traditional Alpha-beta. PMLR, Vol. 24 » FSSS-Minimax, MCTS

2014

- Rémi Munos (2014). From Bandits to Monte-Carlo Tree Search: The Optimistic Principle Applied to Optimization and Planning. Foundations and Trends in Machine Learning, Vol. 7, No 1, hal-00747575v5, slides as pdf

- David W. King (2014). Complexity, Heuristic, and Search Analysis for the Games of Crossings and Epaminondas. Masters thesis, Air Force Institute of Technology, pdf [12]

- David W. King, Gilbert L. Peterson (2014). Epaminondas: Exploring Combat Tactics. ICGA Journal, Vol. 37, No. 3 [13]

- Truong-Huy Dinh Nguyen, Tomi Silander, Wee Sun Lee, Tze-Yun Leong (2014). Bootstrapping Simulation-Based Algorithms with a Suboptimal Policy. ICAPS 2014, YouTube Video

2015 ...

- Xi Liang, Ting-Han Wei, I-Chen Wu (2015). Job-level UCT search for solving Hex. CIG 2015

- Yusaku Mandai, Tomoyuki Kaneko (2015). LinUCB Applied to Monte Carlo Tree Search. Advances in Computer Games 14

- Yun-Ching Liu, Yoshimasa Tsuruoka (2015). Adapting Improved Upper Confidence Bounds for Monte-Carlo Tree Search. Advances in Computer Games 14

- S. Ali Mirsoleimani, Aske Plaat, Jaap van den Herik (2015). Ensemble UCT Needs High Exploitation. CoRR abs/1509.08434

- Johannes Heinrich, David Silver (2015). Smooth UCT Search in Computer Poker. IJCAI 2015, pdf

- Xi Liang, Ting-Han Wei, I-Chen Wu (2015). Solving Hex Openings Using Job-Level UCT Search. ICGA Journal, Vol. 38, No. 3

- Naoki Mizukami, Jun Suzuki, Hirotaka Kameko, Yoshimasa Tsuruoka (2017). Exploration Bonuses Based on Upper Confidence Bounds for Sparse Reward Games. Advances in Computer Games 15

- Jacek Mańdziuk (2018). MCTS/UCT in Solving Real-Life Problems. Advances in Data Analysis with Computational Intelligence Methods, Springer

- Kiminori Matsuzaki (2018). Empirical Analysis of PUCT Algorithm with Evaluation Functions of Different Quality. TAAI 2018

Forum Posts

- Re: Chess vs Go // AI vs IA by Gian-Carlo Pascutto, June 02, 2010

- Monte Carlo (upper confidence bounds applied to trees) by ChessGO, Computer Go, October 22, 2010

- UCT surprise for checkers ! by Daniel Shawul, CCC, March 25, 2011

- uct on gpu by Daniel Shawul, CCC, February 24, 2012 » GPU

- My new book by Daniel Shawul, CCC, January 02, 2014 » Opening Book

- Nebiyu-MCTS vs TSCP 1-0 by Daniel Shawul, CCC, December 10, 2017 » Nebiyu

- Search traps in MCTS and chess by Daniel Shawul, CCC, December 25, 2017 » Sampling-Based Planning

- MCTS weakness wrt AB (via Daniel Shawul) by Chris Whittington, Rybka Forum, December 25, 2017

- Announcing lczero by Gary, CCC, January 09, 2018 » Leela Chess Zero

External Links

- Exploration and exploitation - in Monte Carlo tree search from Wikipedia

- Confidence interval from Wikipedia

- Multi-armed bandit from Wikipedia

- GitHub - theKGS/MCTS: Java implementation of UCT based MCTS and Flat MCTS

- Sensei's Library: UCT

- Lange Nacht der Wissenschaften - Long Night of Sciences Jena - 2007 by Ingo Althöfer, MC and UCT poster by Jakob Erdmann

References

1. Levente Kocsis, Csaba Szepesvári (2006). Bandit based Monte-Carlo Planning. ECML-06, LNCS/LNAI 4212, pdf

2. Sylvain Gelly, Marc Schoenauer, Michèle Sebag, Olivier Teytaud, Levente Kocsis, David Silver, Csaba Szepesvári (2012). The Grand Challenge of Computer Go: Monte Carlo Tree Search and Extensions. Communications of the ACM, Vol. 55, No. 3, pdf preprint

3. see UCB1 in Peter Auer, Nicolò Cesa-Bianchi, Paul Fischer (2002). Finite-time Analysis of the Multiarmed Bandit Problem. Machine Learning, Vol. 47, No. 2

4. Exploration and exploitation - in Monte Carlo tree search from Wikipedia

5. Sylvain Gelly, David Silver (2011). Monte-Carlo tree search and rapid action value estimation in computer Go. Artificial Intelligence, Vol. 175, No. 11, preprint as pdf

6. Christopher D. Rosin (2011). Multi-armed bandits with episode context. Annals of Mathematics and Artificial Intelligence, Vol. 61, No. 3, ISAIM 2010 pdf

7. David Silver, Aja Huang, Chris J. Maddison, Arthur Guez, Laurent Sifre, George van den Driessche, Julian Schrittwieser, Ioannis Antonoglou, Veda Panneershelvam, Marc Lanctot, Sander Dieleman, Dominik Grewe, John Nham, Nal Kalchbrenner, Ilya Sutskever, Timothy Lillicrap, Madeleine Leach, Koray Kavukcuoglu, Thore Graepel, Demis Hassabis (2016). Mastering the game of Go with deep neural networks and tree search. Nature, Vol. 529

8. FAQ · LeelaChessZero/lc0 Wiki · GitHub

9. Re: Chess vs Go // AI vs IA by Gian-Carlo Pascutto, June 02, 2010

10. Raghuram Ramanujan, Ashish Sabharwal, Bart Selman (2010). On Adversarial Search Spaces and Sampling-Based Planning. ICAPS 2010

11. Search traps in MCTS and chess by Daniel Shawul, CCC, December 25, 2017

12. Crossings from Wikipedia

13. Epaminondas from Wikipedia