Difference between revisions of "Supervised Learning"

Revisions by GerdIsenberg (3 intermediate revisions by the same user not shown)

| Line 1: | Line 1: | ||

'''[[Main Page|Home]] * [[Learning]] * Supervised Learning''' | '''[[Main Page|Home]] * [[Learning]] * Supervised Learning''' | ||

| − | '''Supervised Learning''',<br/> | + | '''Supervised Learning''', (SL)<br/> |

is learning from examples provided by a knowledgable external [https://en.wikipedia.org/wiki/Supervisor supervisor]. | is learning from examples provided by a knowledgable external [https://en.wikipedia.org/wiki/Supervisor supervisor]. | ||

| − | In machine learning, supervised learning is a technique for deducing a function from [https://en.wikipedia.org/wiki/Training,_validation,_and_test_sets training data]. The training data consist of pairs of input objects and desired outputs <ref>[https://en.wikipedia.org/wiki/Supervised_learning Supervised learning from Wikipedia]</ref> | + | In machine learning, supervised learning is a technique for deducing a function from [https://en.wikipedia.org/wiki/Training,_validation,_and_test_sets training data]. The training data consist of pairs of input objects and desired outputs. After parameter adjustment and learning, the performance of the resulting function should be measured on a test set that is separate from the training set <ref>[https://en.wikipedia.org/wiki/Supervised_learning Supervised learning from Wikipedia]</ref>. |

| − | =Move Adaption= | + | =SL in a nutshell= |

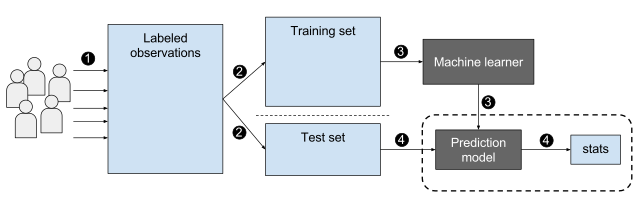

| + | [[FILE:Supervised machine learning in a nutshell.svg|640px|none|border|text-bottom]] | ||

| + | <ref>A data flow diagram shows the machine learning process in summary, by [https://en.wikipedia.org/wiki/User:EpochFail EpochFail], November 15, 2015, [https://en.wikipedia.org/wiki/Wikimedia_Commons Wikimedia Commons]</ref> | ||

| + | |||

| + | =SL in Chess= | ||

| + | In computer games and chess, supervised learning techniques were used in [[Automated Tuning|automated tuning]] or to train [[Neural Networks|neural network]] game and chess programs. Input objects are [[Chess Position|chess positions]]. The desired output is either the supervisor's move choice in that position ([[Automated Tuning#MoveAdaption|move adaption]]), or a [[Score|score]] provided by an [[Oracle|oracle]] ([[Automated Tuning#ValueAdaption|value adaption]]). | ||

| + | |||

| + | ==Move Adaption== | ||

[[Automated Tuning#MoveAdaption|Move adaption]] can be applied by [[Automated Tuning#LinearRegression|linear regression]] to minimize a [https://en.wikipedia.org/wiki/Loss_function cost function] considering the rank-number of the desired move in a [[Move List|move list]] ordered by score <ref>[[Tony Marsland]] ('''1985'''). ''Evaluation-Function Factors''. [[ICGA Journal#8_2|ICCA Journal, Vol. 8, No. 2]], [http://webdocs.cs.ualberta.ca/~tony/OldPapers/evaluation.pdf pdf]</ref>. | [[Automated Tuning#MoveAdaption|Move adaption]] can be applied by [[Automated Tuning#LinearRegression|linear regression]] to minimize a [https://en.wikipedia.org/wiki/Loss_function cost function] considering the rank-number of the desired move in a [[Move List|move list]] ordered by score <ref>[[Tony Marsland]] ('''1985'''). ''Evaluation-Function Factors''. [[ICGA Journal#8_2|ICCA Journal, Vol. 8, No. 2]], [http://webdocs.cs.ualberta.ca/~tony/OldPapers/evaluation.pdf pdf]</ref>. | ||

| − | =Value Adaption= | + | ==Value Adaption== |

One common idea to provide an [[Oracle|oracle]] for supervised [[Automated Tuning#ValueAdaption|value adaption]] is to use the win/draw/loss outcome from finished games | One common idea to provide an [[Oracle|oracle]] for supervised [[Automated Tuning#ValueAdaption|value adaption]] is to use the win/draw/loss outcome from finished games | ||

for all training positions selected from that game. Discrete {-1, 0, +1} or {0, ½, 1} desired values are the domain of [[Automated Tuning#LogisticRegression|logistic regression]] and require the | for all training positions selected from that game. Discrete {-1, 0, +1} or {0, ½, 1} desired values are the domain of [[Automated Tuning#LogisticRegression|logistic regression]] and require the | ||

| Line 40: | Line 47: | ||

* [[Michael Buro]] ('''2002'''). ''Improving Mini-max Search by Supervised Learning.'' [https://en.wikipedia.org/wiki/Artificial_Intelligence_(journal) Artificial Intelligence], Vol. 134, No. 1, [http://www.cs.ualberta.ca/%7Emburo/ps/logaij.pdf pdf] | * [[Michael Buro]] ('''2002'''). ''Improving Mini-max Search by Supervised Learning.'' [https://en.wikipedia.org/wiki/Artificial_Intelligence_(journal) Artificial Intelligence], Vol. 134, No. 1, [http://www.cs.ualberta.ca/%7Emburo/ps/logaij.pdf pdf] | ||

* [[Dave Gomboc]], [[Michael Buro]], [[Tony Marsland]] ('''2005'''). ''Tuning Evaluation Functions by Maximizing Concordance''. [https://en.wikipedia.org/wiki/Theoretical_Computer_Science_%28journal%29 Theoretical Computer Science], Vol. 349, No. 2, [http://www.cs.ualberta.ca/%7Emburo/ps/tcs-learn.pdf pdf] | * [[Dave Gomboc]], [[Michael Buro]], [[Tony Marsland]] ('''2005'''). ''Tuning Evaluation Functions by Maximizing Concordance''. [https://en.wikipedia.org/wiki/Theoretical_Computer_Science_%28journal%29 Theoretical Computer Science], Vol. 349, No. 2, [http://www.cs.ualberta.ca/%7Emburo/ps/tcs-learn.pdf pdf] | ||

| + | * [[Amos Storkey]], [https://www.k.u-tokyo.ac.jp/pros-e/person/masashi_sugiyama/masashi_sugiyama.htm Masashi Sugiyama] ('''2006'''). ''[http://papers.neurips.cc/paper/3019-mixture-regression-for-covariate-shift Mixture Regression for Covariate Shift]''. [https://dblp.uni-trier.de/db/conf/nips/nips2006.html NIPS 2006] | ||

| + | * [[Eli David|Omid David]], [[Moshe Koppel]], [[Nathan S. Netanyahu]] ('''2008'''). ''Genetic Algorithms for Mentor-Assisted Evaluation Function Optimization''. [http://www.sigevo.org/gecco-2008/ GECCO '08], [https://arxiv.org/abs/1711.06839 arXiv:1711.06839] | ||

| + | * [[Eli David|Omid David]], [[Jaap van den Herik]], [[Moshe Koppel]], [[Nathan S. Netanyahu]] ('''2009'''). ''Simulating Human Grandmasters: Evolution and Coevolution of Evaluation Functions''. [http://www.sigevo.org/gecco-2009/ GECCO '09], [https://arxiv.org/abs/1711.06840 arXiv:1711.06840] | ||

==2010 ...== | ==2010 ...== | ||

* [[Tor Lattimore]], [[Marcus Hutter]] ('''2011'''). ''No Free Lunch versus Occam's Razor in Supervised Learning''. [https://en.wikipedia.org/wiki/Ray_Solomonoff Solomonoff] Memorial, [https://en.wikipedia.org/wiki/Lecture_Notes_in_Computer_Science Lecture Notes in Computer Science], [https://en.wikipedia.org/wiki/Springer-Verlag Springer], [https://arxiv.org/abs/1111.3846 arXiv:1111.3846] <ref>[https://en.wikipedia.org/wiki/No_free_lunch_in_search_and_optimization No free lunch in search and optimization - Wikipedia]</ref> <ref>[https://en.wikipedia.org/wiki/Occam%27s_razor Occam's razor from Wikipedia]</ref> | * [[Tor Lattimore]], [[Marcus Hutter]] ('''2011'''). ''No Free Lunch versus Occam's Razor in Supervised Learning''. [https://en.wikipedia.org/wiki/Ray_Solomonoff Solomonoff] Memorial, [https://en.wikipedia.org/wiki/Lecture_Notes_in_Computer_Science Lecture Notes in Computer Science], [https://en.wikipedia.org/wiki/Springer-Verlag Springer], [https://arxiv.org/abs/1111.3846 arXiv:1111.3846] <ref>[https://en.wikipedia.org/wiki/No_free_lunch_in_search_and_optimization No free lunch in search and optimization - Wikipedia]</ref> <ref>[https://en.wikipedia.org/wiki/Occam%27s_razor Occam's razor from Wikipedia]</ref> | ||

| Line 46: | Line 56: | ||

* [[Christopher Clark]], [[Amos Storkey]] ('''2014'''). ''Teaching Deep Convolutional Neural Networks to Play Go''. [http://arxiv.org/abs/1412.3409 arXiv:1412.3409] | * [[Christopher Clark]], [[Amos Storkey]] ('''2014'''). ''Teaching Deep Convolutional Neural Networks to Play Go''. [http://arxiv.org/abs/1412.3409 arXiv:1412.3409] | ||

* [[Wen-Jie Tseng]], [[Jr-Chang Chen]], [[I-Chen Wu]], [[Tinghan Wei]] ('''2018'''). ''Comparison Training for Computer Chinese Chess''. [https://arxiv.org/abs/1801.07411 arXiv:1801.07411] | * [[Wen-Jie Tseng]], [[Jr-Chang Chen]], [[I-Chen Wu]], [[Tinghan Wei]] ('''2018'''). ''Comparison Training for Computer Chinese Chess''. [https://arxiv.org/abs/1801.07411 arXiv:1801.07411] | ||

| + | ==2020 ...== | ||

| + | * [[Johannes Czech]], [[Moritz Willig]], [[Alena Beyer]], [[Kristian Kersting]], [[Johannes Fürnkranz]] ('''2020'''). ''[https://www.frontiersin.org/articles/10.3389/frai.2020.00024/full Learning to Play the Chess Variant Crazyhouse Above World Champion Level With Deep Neural Networks and Human Data]''. [https://www.frontiersin.org/journals/artificial-intelligence# Frontiers in Artificial Intelligence] » [[CrazyAra]] | ||

=Forum Posts= | =Forum Posts= | ||

Latest revision as of 11:07, 22 May 2021


Supervised Learning (SL) is learning from examples provided by a knowledgeable external supervisor.

In machine learning, supervised learning is a technique for deducing a function from training data. The training data consist of pairs of input objects and desired outputs. After parameter adjustment and learning, the performance of the resulting function should be measured on a test set that is separate from the training set [1].
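As a minimal, generic illustration (not chess-specific), the sketch below deduces a function from input/output pairs by an ordinary least-squares fit of a straight line, then measures its performance on a test set kept separate from the training set. The data set and the helper names `fit_line` and `mean_squared_error` are hypothetical, chosen only for this example.

```python
from typing import List, Tuple

# Each example is an (input object, desired output) pair.
Pair = Tuple[float, float]


def fit_line(train: List[Pair]) -> Tuple[float, float]:
    """Least-squares fit of y = slope * x + intercept to the training pairs."""
    n = len(train)
    mean_x = sum(x for x, _ in train) / n
    mean_y = sum(y for _, y in train) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in train)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in train)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def mean_squared_error(model: Tuple[float, float], data: List[Pair]) -> float:
    """Average squared difference between desired and predicted outputs."""
    slope, intercept = model
    return sum((y - (slope * x + intercept)) ** 2 for x, y in data) / len(data)


# Hypothetical data: the desired outputs follow y = 2x + 1.
examples = [(float(x), 2.0 * x + 1.0) for x in range(10)]
train = [p for p in examples if int(p[0]) % 5 != 0]  # used for learning
test = [p for p in examples if int(p[0]) % 5 == 0]   # held out, never seen while fitting

model = fit_line(train)
test_error = mean_squared_error(model, test)
```

Because the desired outputs here are noise-free, the fitted line is exact and the test error is zero; with real data the test error is typically larger than the training error, which is why it is measured on separate data.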


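SL in a nutshell

[Figure: Supervised machine learning in a nutshell, a data flow diagram showing the machine learning process in summary [2]]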

SL in Chess

In computer games and chess, supervised learning techniques have been used in automated tuning and to train neural network game and chess programs. The input objects are chess positions. The desired output is either the supervisor's move choice in that position (move adaption) or a score provided by an oracle (value adaption).
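The two kinds of desired output can be made concrete with a small data model. This is only a sketch: positions are assumed to be stored as FEN strings and supervisor moves in UCI long algebraic notation (e.g. `e2e4`); the class and function names are hypothetical.

```python
from dataclasses import dataclass
from typing import Union


@dataclass(frozen=True)
class MoveTarget:
    """Desired output for move adaption: the supervisor's move choice."""
    move: str  # assumed UCI notation, e.g. "e2e4"


@dataclass(frozen=True)
class ValueTarget:
    """Desired output for value adaption: a score provided by an oracle."""
    score: float  # e.g. a game result in {0, 0.5, 1}


@dataclass(frozen=True)
class TrainingPair:
    fen: str  # input object: a chess position
    target: Union[MoveTarget, ValueTarget]


def adaption_kind(pair: TrainingPair) -> str:
    """Report which supervised scheme a training pair belongs to."""
    if isinstance(pair.target, MoveTarget):
        return "move adaption"
    return "value adaption"


START = "rnbqkbnr/pppppppp/8/8/8/8/PPPPPPPP/RNBQKBNR w KQkq - 0 1"
samples = [
    TrainingPair(START, MoveTarget("e2e4")),  # a supervisor's move choice
    TrainingPair(START, ValueTarget(0.5)),    # an oracle's value, e.g. a drawn game
]
```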

Move Adaption

Move adaption can be applied with linear regression, minimizing a cost function based on the rank-number of the desired move in a move list ordered by score [3].
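A minimal sketch of one plausible rank-based cost function, assuming a linear evaluation (a weighted sum of hypothetical per-move feature values). The cost of a training position is taken here as the number of moves the current weights score strictly higher than the supervisor's move, so 0 means the desired move is ranked first; ties are not counted. Only the cost is shown; the regression that adjusts the weights to minimize it is left out, and all names are illustrative.

```python
from typing import List, Sequence, Tuple

# A training position: feature vectors of all candidate moves, plus the index
# of the move the supervisor chose.
Position = Tuple[List[Sequence[float]], int]


def move_score(weights: Sequence[float], features: Sequence[float]) -> float:
    """Linear evaluation of one move: weighted sum of its feature values."""
    return sum(w * f for w, f in zip(weights, features))


def rank_of_desired(weights: Sequence[float], position: Position) -> int:
    """Rank-number of the desired move in the score-ordered move list (0 = best)."""
    moves, desired_index = position
    desired_score = move_score(weights, moves[desired_index])
    return sum(
        1
        for i, features in enumerate(moves)
        if i != desired_index and move_score(weights, features) > desired_score
    )


def rank_cost(weights: Sequence[float], training_set: List[Position]) -> int:
    """Total cost over the training set; lower is better."""
    return sum(rank_of_desired(weights, p) for p in training_set)


# Hypothetical training set with two features per move.
training_set: List[Position] = [
    ([[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]], 1),
    ([[2.0, 0.0], [0.0, 3.0]], 1),
]
```

With weights `[1.0, 0.0]` the supervisor's moves are ranked third and second (cost 2 + 1 = 3); with `[0.0, 1.0]` both are ranked first (cost 0). Weights that rank the supervisor's moves higher give a lower cost, which is what the tuning procedure exploits.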

Value Adaption

One common way to provide an oracle for supervised value adaption is to use the win/draw/loss outcome of a finished game as the desired value for all training positions selected from that game. Discrete desired values such as {-1, 0, +1} or {0, ½, 1} are the domain of logistic regression: the evaluation scores must first be mapped from pawn advantage to winning probabilities with a sigmoid function, so that the mean squared error of the cost function can be calculated and minimized, as demonstrated by Texel's Tuning Method.
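A minimal sketch of this scheme in the spirit of Texel's Tuning Method: an evaluation score s in centipawns is mapped to an expected result with the sigmoid 1 / (1 + 10^(-K*s/400)), and the cost is the mean squared error against game results in {0, ½, 1}. The linear evaluation, its feature vector, the scaling constant K, the step size, and the simple local search are assumptions chosen for illustration, not a definitive implementation.

```python
from typing import List, Sequence, Tuple

# A training sample: feature vector of a position and the result of the game
# it was taken from (1 = win, 0.5 = draw, 0 = loss, from White's point of view).
Sample = Tuple[Sequence[float], float]


def win_probability(score_cp: float, k: float = 1.0) -> float:
    """Map a centipawn score to an expected game result in [0, 1]."""
    return 1.0 / (1.0 + 10.0 ** (-k * score_cp / 400.0))


def evaluate(weights: Sequence[float], features: Sequence[float]) -> float:
    """Hypothetical linear evaluation in centipawns."""
    return sum(w * f for w, f in zip(weights, features))


def mean_squared_error(weights: Sequence[float], samples: List[Sample], k: float = 1.0) -> float:
    """Cost function: mean squared difference between game results and predictions."""
    return sum(
        (result - win_probability(evaluate(weights, features), k)) ** 2
        for features, result in samples
    ) / len(samples)


def tune(weights: Sequence[float], samples: List[Sample], step: float = 50.0,
         max_rounds: int = 100) -> Tuple[List[float], float]:
    """Local search: nudge each weight by +/- step while the error decreases."""
    best_weights = list(weights)
    best_error = mean_squared_error(best_weights, samples)
    for _ in range(max_rounds):
        improved = False
        for i in range(len(best_weights)):
            for delta in (step, -step):
                candidate = list(best_weights)
                candidate[i] += delta
                error = mean_squared_error(candidate, samples)
                if error < best_error:
                    best_weights, best_error = candidate, error
                    improved = True
                    break  # keep this change and move on to the next weight
        if not improved:
            break
    return best_weights, best_error


# Hypothetical data: one feature, the material balance in pawns.
samples: List[Sample] = [([1.0], 1.0), ([-1.0], 0.0), ([0.0], 0.5)]
initial_error = mean_squared_error([0.0], samples)
weights, final_error = tune([0.0], samples)
```

Starting from a zero weight, every position is predicted as a draw (error 1/6); the search raises the material weight because positive material correlates with the wins in this toy data, lowering the error.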

See also

- Supervised Learning in Automated Tuning

- Book Learning

- Chessmaps Heuristic

- CHREST

- Deep Learning

- Neural Networks

- Planning

- Reinforcement Learning

- Temporal Difference Learning

Selected Publications

1960 ...

- Arthur Samuel (1967). Some Studies in Machine Learning Using the Game of Checkers. II-Recent Progress. pdf

1980 ...

- Thomas Nitsche (1982). A Learning Chess Program. Advances in Computer Chess 3

- Tony Marsland (1985). Evaluation-Function Factors. ICCA Journal, Vol. 8, No. 2, pdf

- Eric B. Baum, Frank Wilczek (1987). Supervised Learning of Probability Distributions by Neural Networks. NIPS 1987

- Maarten van der Meulen (1989). Weight Assessment in Evaluation Functions. Advances in Computer Chess 5

1990 ...

- Michèle Sebag (1990). A symbolic-numerical approach for supervised learning from examples and rules. Ph.D. thesis, Paris Dauphine University

- Feng-hsiung Hsu, Thomas Anantharaman, Murray Campbell, Andreas Nowatzyk (1990). A Grandmaster Chess Machine. Scientific American, Vol. 263, No. 4

- Thomas Anantharaman (1997). Evaluation Tuning for Computer Chess: Linear Discriminant Methods. ICCA Journal, Vol. 20, No. 4

2000 ...

- Michael Buro (2002). Improving Mini-max Search by Supervised Learning. Artificial Intelligence, Vol. 134, No. 1, pdf

- Dave Gomboc, Michael Buro, Tony Marsland (2005). Tuning Evaluation Functions by Maximizing Concordance. Theoretical Computer Science, Vol. 349, No. 2, pdf

- Amos Storkey, Masashi Sugiyama (2006). Mixture Regression for Covariate Shift. NIPS 2006

- Omid David, Moshe Koppel, Nathan S. Netanyahu (2008). Genetic Algorithms for Mentor-Assisted Evaluation Function Optimization. GECCO '08, arXiv:1711.06839

- Omid David, Jaap van den Herik, Moshe Koppel, Nathan S. Netanyahu (2009). Simulating Human Grandmasters: Evolution and Coevolution of Evaluation Functions. GECCO '09, arXiv:1711.06840

2010 ...

- Tor Lattimore, Marcus Hutter (2011). No Free Lunch versus Occam's Razor in Supervised Learning. Solomonoff Memorial, Lecture Notes in Computer Science, Springer, arXiv:1111.3846 [4] [5]

- Wen-Jie Tseng, Jr-Chang Chen, I-Chen Wu, Ching-Hua Kuo, Bo-Han Lin (2013). A Supervised Learning Method for Chinese Chess Programs. JSAI2013

- Kunihito Hoki, Tomoyuki Kaneko (2014). Large-Scale Optimization for Evaluation Functions with Minimax Search. JAIR Vol. 49, pdf

- Christopher Clark, Amos Storkey (2014). Teaching Deep Convolutional Neural Networks to Play Go. arXiv:1412.3409

- Wen-Jie Tseng, Jr-Chang Chen, I-Chen Wu, Tinghan Wei (2018). Comparison Training for Computer Chinese Chess. arXiv:1801.07411

2020 ...

- Johannes Czech, Moritz Willig, Alena Beyer, Kristian Kersting, Johannes Fürnkranz (2020). Learning to Play the Chess Variant Crazyhouse Above World Champion Level With Deep Neural Networks and Human Data. Frontiers in Artificial Intelligence » CrazyAra

Forum Posts

- Re: Insanity... or Tal style? by Miguel A. Ballicora, CCC, April 02, 2009 » Gaviota

- Re: How Do You Automatically Tune Your Evaluation Tables by Álvaro Begué, CCC, January 08, 2014

- The texel evaluation function optimization algorithm by Peter Österlund, CCC, January 31, 2014 » Texel's Tuning Method

- SL vs RL by Chris Whittington, CCC, April 28, 2019

External Links

- Supervised learning from Wikipedia

- Category: Supervised learning - Scholarpedia

- Boosting (machine learning) from Wikipedia

- Computational learning theory from Wikipedia

- Support vector machine from Wikipedia

References

- [1] Supervised learning from Wikipedia

- [2] A data flow diagram shows the machine learning process in summary, by EpochFail, November 15, 2015, Wikimedia Commons

- [3] Tony Marsland (1985). Evaluation-Function Factors. ICCA Journal, Vol. 8, No. 2, pdf

- [4] No free lunch in search and optimization - Wikipedia

- [5] Occam's razor from Wikipedia